The goal

Inspired by CodeMiko and the creative potential of VTubing, the aim was to let anyone scan themselves and step into a shared virtual world as a true replica: not a stylized stand-in, but a photorealistic digital twin with live facial expression tracking.

Unreal Engine's MetaHuman provided the ideal foundation: a fully rigged humanoid with swappable assets and native support for real-time facial animation from an iPhone via Live Link.

The problem

The target audience was broad and not limited to tech-savvy users. Any capture pipeline had to be accurate enough that the avatar felt like the user, yet simple enough for someone who had never touched 3D software. Cost also had to stay near zero during R&D.

Process

MetaHuman Creator + PureRef overlays

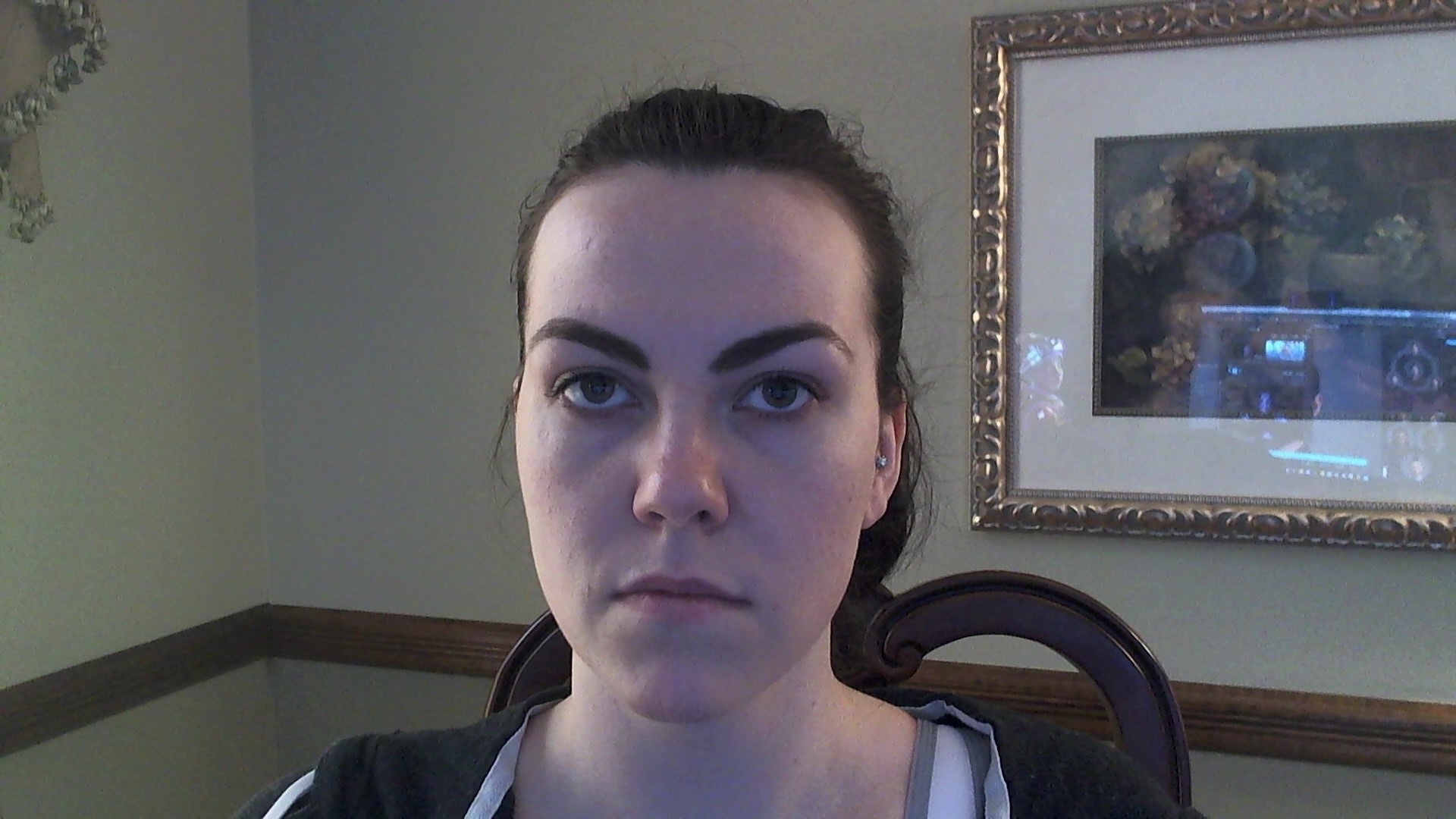

Reference photos were overlaid using PureRef, and facial features were sculpted by hand in MetaHuman Creator. The results were too inaccurate, and the method required users to pull all of their hair back from their face, a barrier for a general audience.

3DF Zephyr point cloud capture

Photogrammetry, an increasingly popular 3D capture method, was explored next. A first test with a plant on a lazy Susan failed because the scene lacked background anchor points. A second test with a plaster bust succeeded, and the method was then applied to a human subject. After multiple iterations, a clean OBJ mesh was produced and polished in Maya.

Mesh into MetaHuman Creator

The final OBJ was imported into MetaHuman Creator, which mapped the facial geometry onto a full humanoid rig. After some manual adjustments, the result was a complete digital twin, ready for real-time facial animation via iPhone Live Link.

Key learnings

Outcome

Pipeline: Photogrammetry → OBJ → MetaHuman

Live tracking: iPhone 12 Pro+ via Live Link

Status: Proof of concept validated

The pipeline showed that a low-budget photogrammetry workflow can produce a usable MetaHuman avatar. The next phase would focus on simplifying capture for non-technical users, ideally reducing it to a single smartphone scan.